Miggo’s execution-first approach tackles AI threats, providing over 70% coverage across OWASP Top 10 for LLM Applications and Top 10 for Agentic Applications.

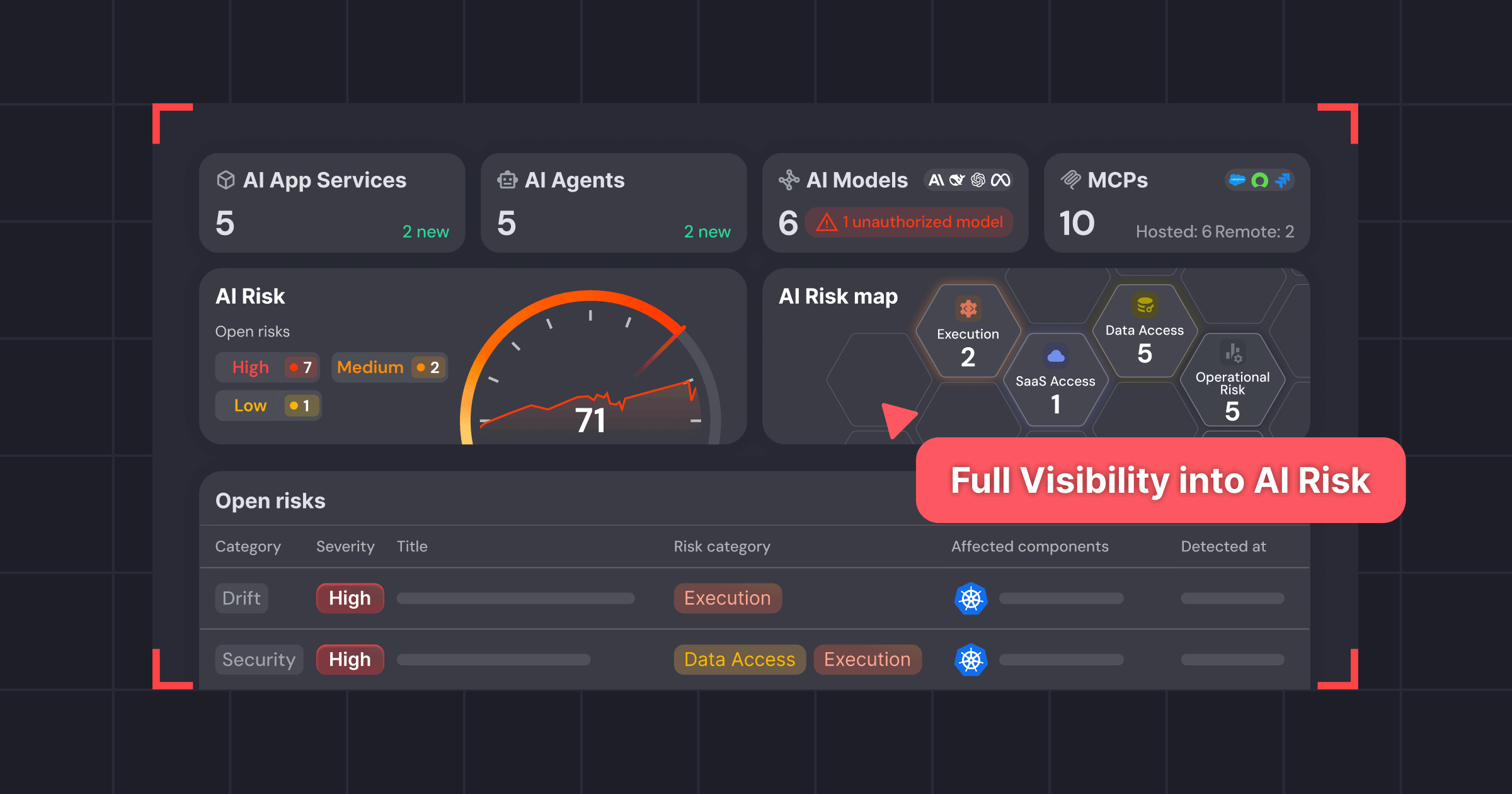

RSA CONFERENCE, San Francisco, March 24, 2026 — Miggo Security, a pioneer in Application Detection and Response (ADR) and AI Security, today announced a major expansion of its Runtime Defense Platform. The release includes AI Bill of Materials (AI-BOM), runtime guardrails, and Agentic Detection & Response (AIDR & Agentic DR) to give security teams unprecedented visibility and control over AI agents, Model Context Protocol (MCP) toolchains, and Shadow AI running in production.

As vibe coding tools such as Claude Code take hold, organizations will build environments faster, and those environments will need more security of a very specific kind. The attack surface for AI and agentic apps lives in production, not in the codebase. AI components and agents dynamically select models, invoke tools, and access data at runtime, and traditional security controls cannot accurately defend this non-deterministic behavior or its true attack surface. Organizations will need Miggo’s Runtime Defense Platform for Applications, AI, and Agents to gain visibility into what a model or agent is doing, and to detect and block malicious actions in real time.

This announcement follows Miggo Security’s recent research on indirect prompt injection in Google Gemini integrations, which demonstrated how a weaponized calendar invite could influence downstream AI behavior through trusted context. This research highlighted a growing execution gap in modern AI security.

To close this gap, Miggo is shifting the security focus from prompts to execution behavior. Using its patented DeepTracing™ technology, Miggo delivers runtime truth by continuously proving what AI agents exist, how they behave, what they can access, and how their behavior changes over time based on actual execution evidence.

“AI risk materializes at runtime,” asserts Daniel Shechter, CEO of Miggo Security. “For teams using popular agent frameworks, like LangChain, and MCP-connected toolchains, this architecture makes runtime execution the primary attack surface. I'm proud of the technology we've built at Miggo, which has always been centered around deep context – and by extending our patented DeepTracing capabilities, we're now bringing robust AI and agentic defense directly into modern environments.”

Key capabilities of the expanded Runtime Defense Platform for Applications, AI, and Agents include:

- AI-BOM Discovery and Execution Visibility: Automatically discovers AI components across applications, MCP toolchains, and agent runtimes to map reasoning and execution paths. It continuously uncovers models, tools, and data access at runtime.

- Behavioral Drift Detection: Baselines agent behavior and highlights changes over time with full security context.

- Runtime Guardrails: Allows security teams to enforce allowed models, tools, and permissions by approving or rejecting detected drift.

- Execution-Level Detection for AI Agents: Traces tool calls, model and artifact loading, system actions, file access, and network behavior to pinpoint agent-driven compromise paths.

- MCP-Aware Monitoring for Toolchains: Monitors MCP-mediated tool use to detect abnormal access, risky chaining patterns, and high-impact execution paths.

- AI-Aware Application Protection: Extends Miggo’s WAF Copilot to AI-driven vulnerabilities by correlating AI functionality with runtime execution context to generate tailored detection and policy rules for missing guardrails and unintended exposures.

- Risk Scoring and End-to-End Attack Stories: Correlates events into a timeline and prioritizes risk based on real impact—such as blast radius, data access, and internet exposure—to accelerate triage and incident response.

- Compliance Support: Provides runtime evidence to support internal AI policies and emerging regulations such as the EU AI Act.
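The drift detection and guardrail capabilities listed above can be pictured with a small sketch. It is an illustration only, not Miggo's implementation: the `AgentBaseline` class, its methods, and the agent, tool, and database names are all hypothetical. It assumes a baseline is simply the set of (tool, resource) pairs an agent is approved to use, and that a reviewer approves or rejects anything new.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentBaseline:
    """Hypothetical baseline: (tool, resource) pairs reviewed for one agent."""
    agent: str
    approved: set[tuple[str, str]] = field(default_factory=set)
    rejected: set[tuple[str, str]] = field(default_factory=set)

    def check(self, tool: str, resource: str) -> str:
        """Classify one observed action as 'allow', 'block', or 'drift'."""
        action = (tool, resource)
        if action in self.approved:
            return "allow"
        if action in self.rejected:
            return "block"
        return "drift"  # never seen before: surface it for review

    def review(self, tool: str, resource: str, approve: bool) -> None:
        """Record a reviewer's decision so the same drift is not raised again."""
        target = self.approved if approve else self.rejected
        target.add((tool, resource))


# Illustrative names only.
baseline = AgentBaseline("support-bot", approved={("sql_query", "tickets_db")})

first = baseline.check("sql_query", "tickets_db")    # known behavior
second = baseline.check("sql_query", "billing_db")   # new database: drift
baseline.review("sql_query", "billing_db", approve=False)
third = baseline.check("sql_query", "billing_db")    # now enforced as a guardrail

print(first, second, third)  # allow drift block
```

In this toy model, rejecting a drift event is what turns an observation into an enforced rule, which mirrors the "approving or rejecting detected drift" workflow described in the list.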

To learn more about this offering, visit.

We know you must have questions, so here’s an FAQ for easy reference:

Q: What is it? Who needs it? What does it solve?

A: Our Runtime Defense Platform for AI, Applications, and Agents is needed by Platform Engineers, DevSecOps, and Security Architects whose organizations are deploying autonomous AI agents and model inference (e.g., LLMs) in production. It solves the critical gap of "Runtime Insecurity," protecting against new attack vectors like Agent Goal Hijacking, Prompt Injection, Model Serialization attacks (RCE), and Tool Misuse that traditional AppSec tools miss.

Q: Do you have an example?

A: This is basically EDR for AI applications. We had a dev download a sketchy model from Hugging Face yesterday that tried to open a reverse shell on our prod cluster. Miggo saw the pickle execution and killed it instantly before it could do anything. It’s the only thing that actually sees what these models and agents are doing under the hood.

Q: Why would a CISO tell this to their team?

A: Organizations are letting AI agents talk to their databases and execute code, and right now, they’re flying blind. Current AI gateways stop bad words, but they can't stop a hijacked agent from wiping a server. Miggo gives teams the control to deploy these agents without being the company that got hacked by its own chatbot. It secures the runtime, not just the chat window.

Q: How is this different from the existing RCE detection capability?

A: Standard RCE detection looks for code execution that shouldn't happen at all (like a web server suddenly spawning a shell). This release adds the AI context. In agentic apps, “execution” is a feature, not a bug: agents are supposed to call tools. The new engine distinguishes between an agent using a tool legitimately and an agent that has been hijacked via prompt injection. It also specifically targets Model Supply Chain attacks (like malicious code in model files), which standard RCE detectors may miss.
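To see why a model file can carry code at all, consider Python's `pickle` format, which many model checkpoints use. The sketch below is a static illustration built on the standard library, not the runtime detection described in this release: the helper `suspicious_opcodes` and the `Payload` class are hypothetical names, and the "payload" only references the harmless `print` function. It lists the pickle opcodes that import or call a callable, without ever loading the data.

```python
import pickle
import pickletools

# Opcodes that import a callable or invoke one while a pickle is being loaded.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def suspicious_opcodes(data: bytes) -> list:
    """Return import/call opcode names found in a pickle stream, without loading it."""
    return [
        op.name
        for op, _arg, _pos in pickletools.genops(data)
        if op.name in SUSPICIOUS_OPCODES
    ]


class Payload:
    """Stand-in for a malicious object; the callable used here is harmless."""

    def __reduce__(self):
        # On pickle.loads, the unpickler would call print(...) with this argument.
        return (print, ("code ran during unpickling",))


plain = pickle.dumps({"weights": [0.1, 0.2]})  # data only
crafted = pickle.dumps(Payload())              # data plus a call instruction

plain_hits = suspicious_opcodes(plain)
crafted_hits = suspicious_opcodes(crafted)

print("plain:", plain_hits)
print("crafted:", crafted_hits)
```

The plain dictionary produces no import or call opcodes, while the crafted object produces a `REDUCE` instruction, which is what makes the unpickler invoke a function during loading. A static scan like this cannot tell what the call will actually do once it runs, which is the gap the execution-level approach above is meant to address.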

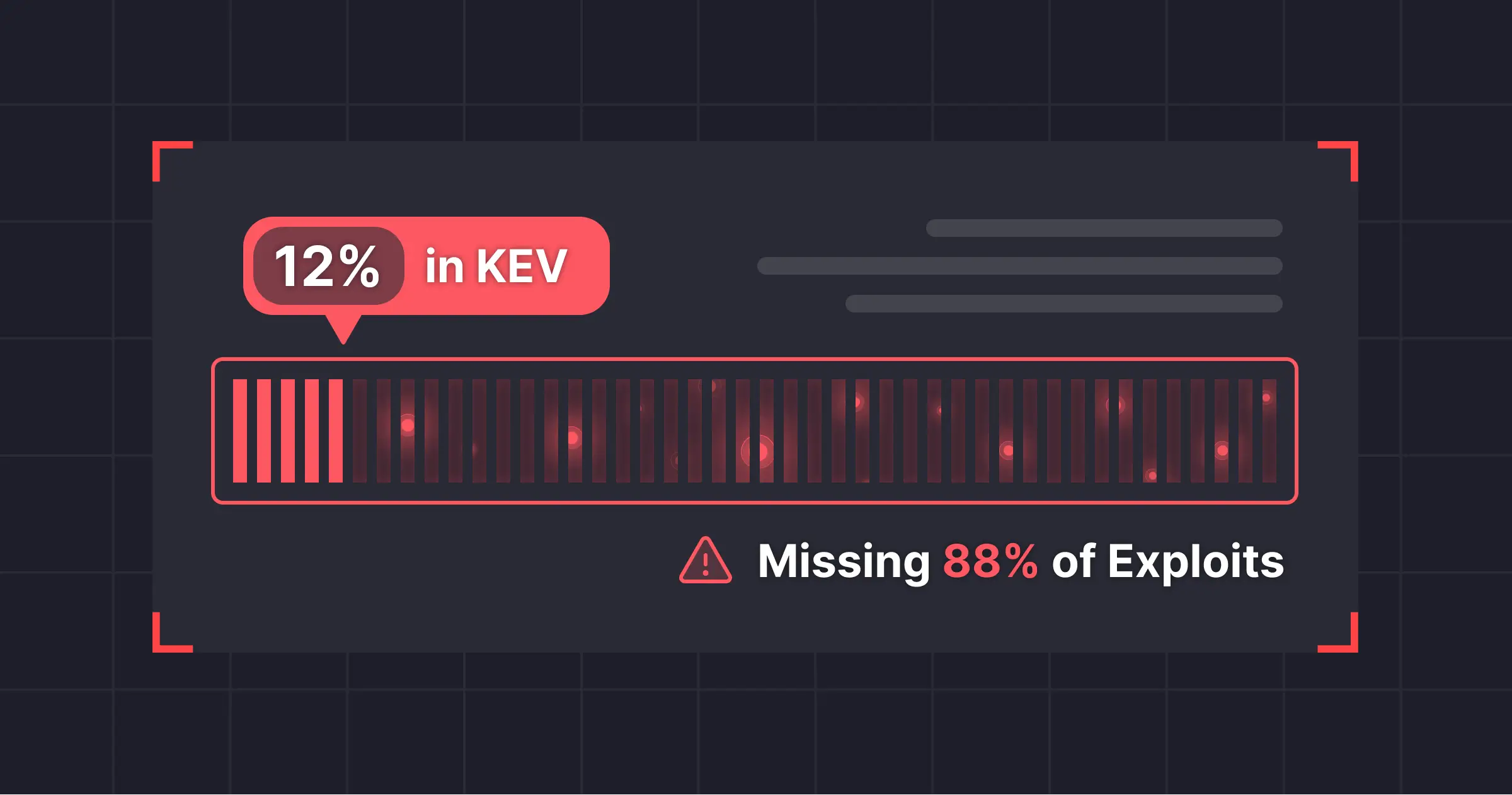

Q: How is this different from AI Firewalls or LLM Gateways?

A: Current solutions like AI Firewalls (WAFs) or LLM Gateways operate "Outside-In". They analyze text prompts and responses to identify patterns. They are blind to what happens inside the application (the code execution, the model loading, the tool chaining). Miggo is "Inside-Out." We see the application and OS runtime. We don't read the chat; we see the functions and traces. We know if a model is executing a pickle bomb, or if an agent is using a legitimate tool to access a database it has never touched before. We enable teams to identify what’s wrong and stop harmful actions.

Q: What about the OWASP Top 10 for LLM Applications and the OWASP Top 10 for Agentic Applications?

A: We cover 7 out of 10 in both categories, focusing on the ones that actually break things. Instead of minor policy issues, we stop the critical runtime threats:

- Supply Chain: When a downloaded model contains malicious code (Pickle/RCE).

- Prompt Injection from various inputs: When it actually changes code execution.

- Agent Hijacking: When an agent gets tricked into doing something it wasn't designed to do.

Learn more about how you can see and secure your AI attack landscape here.